I wouldn't immediately blame the power level, since you have measured wavelengths from 405nm all the way up to 808nm. The wavelength could also be part of the problem with this meter. What meter was used as the standard? How well does it measure these powers at these different wavelengths, and what is its tolerance? There are too many variables here to blame it all on one thing. It might be better to test a single wavelength at different power levels first, then look at other wavelengths.

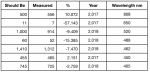

I don't have the meter; I just copied the data from two other people who have the Pocket Power Meter. I assume that the Tornado Laser Power Meter is based on the same circuit as the Pocket Power Meter. The data I can look at, from two different tests done by two different people, is VERY limited. So yes, I'm guessing as to what might be the cause. If I sort the data by wavelength I see the following:

You can make a case that because these are two different meters, with two different sensor coatings, the data is questionable at best. I'd agree, but it's the only data I have. Still, I think I can safely say that the reading might be okay as a "Ballpark Reading", but it isn't really that accurate. By July of 2019 I'll have the Laserbee Meter and, if all goes well, the Tornado Laser Power Meter as well. At that point I could run some more reasonable tests.
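
For those later tests, here is a rough sketch of how I might tabulate the results so that wavelength and power level are looked at separately. It groups readings by wavelength and shows the percent error of the meter under test against the reference meter. The function names and the tuple layout are my own, and the numbers are placeholders only, not real measurements from any meter.

```python
from collections import defaultdict


def percent_error(measured_mw, reference_mw):
    """Signed error of the meter under test relative to the reference, in percent."""
    return (measured_mw - reference_mw) / reference_mw * 100.0


def errors_by_wavelength(readings):
    """Group (wavelength_nm, reference_mw, measured_mw) tuples by wavelength.

    Returns a dict sorted by wavelength, mapping each wavelength to the list
    of percent errors seen at that wavelength (one per power level tested).
    """
    grouped = defaultdict(list)
    for wavelength_nm, reference_mw, measured_mw in readings:
        grouped[wavelength_nm].append(percent_error(measured_mw, reference_mw))
    return dict(sorted(grouped.items()))


# Placeholder values for illustration only, NOT real measurements.
readings = [
    (650, 100.0, 90.0),
    (405, 50.0, 55.0),
    (650, 200.0, 190.0),
]

for wavelength_nm, errs in errors_by_wavelength(readings).items():
    print(f"{wavelength_nm} nm: " + ", ".join(f"{e:+.1f}%" for e in errs))
```

If the error at one wavelength stays roughly constant across power levels, that points toward the sensor's wavelength response. If it changes with power at a single wavelength, the power level itself becomes the more likely suspect.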